The biggest trend these days is the Studio Ghibli trend, in which people upload their photos to ChatGPT and the platform applies a Ghibli-style filter to them. The resulting images look as if they come straight from a Studio Ghibli film.

The trend raises very real ethical concerns regarding art and copyright. Studio Ghibli co-founder and legendary filmmaker Hayao Miyazaki has himself vehemently criticised AI-generated art: in a 2016 interview, he called it an “insult to life itself” and said, “I am utterly disgusted. If you really want to make creepy stuff, you can go ahead and do it, but I would never wish to incorporate this technology into my work at all.” Setting those concerns aside, there are others as well.

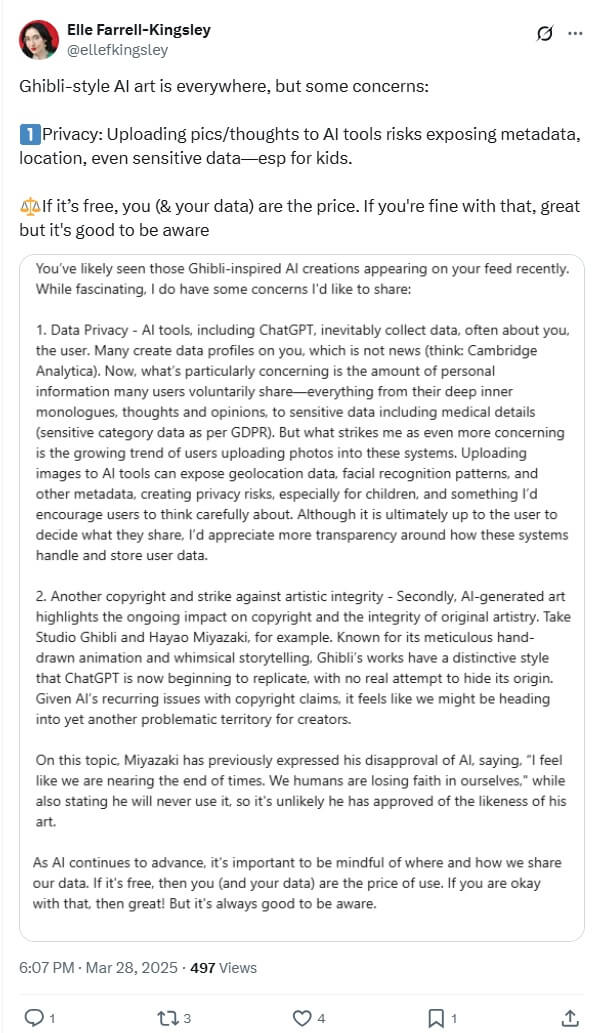

The trend’s virality and the way it is being promoted, with even well-known personalities such as celebrities, influencers, and politicians posting about it, have led to significant concerns about privacy and the potential dangers involved.

What Did The AI Analyst Say?

Luiza Jarovsky, co-founder of the AI, Tech & Privacy Academy and an influential voice in AI governance, recently posted on LinkedIn about the viral Studio Ghibli trend.

She began her post by stating that “most people haven’t realised that the Ghibli Effect is not only an AI copyright controversy but also OpenAI’s PR trick to gain access to thousands of new personal images; here’s how:

To get their own Ghibli (or Sesame Street) version, thousands of people are now voluntarily uploading their faces and personal photos to ChatGPT. As a result, OpenAI is gaining free and easy access to many thousands of new faces to train its AI models.

Some may argue that this is irrelevant because OpenAI could simply scrape the same images from the internet and use them to train its AI models. This is not true for two reasons.”

The first reason she gave was that this trend represents a “Privacy ‘Bypass’.” Basically, she alleged that data protection laws in various countries might prevent OpenAI from training its models on personal images taken from the internet unless that use is permitted.

However, people who upload their images to ChatGPT willingly give OpenAI access to recent photos, and because they provide their consent, the company is allowed to use them.

She wrote, “In places like the EU when OpenAI scrapes personal images from the internet, it relies on legitimate interest as a lawful ground to process personal data (Article 6.1.f of the GDPR). As such, it cannot harm individuals or go against their interests; therefore, it must take additional protective measures, including potentially refraining from training its models with these images (see my previous articles on the topic, including Opinion 28/2024). Other data protection laws also specify additional protections in the case of scraped images, particularly for images of minors.”

However, when individuals voluntarily upload these images, they grant OpenAI consent to process them (Article 6.1.a of the GDPR). This represents a different legal ground that affords OpenAI more freedom, and the legitimate interest balancing test no longer applies.

Moreover, Jarovsky noted, OpenAI’s privacy policy explicitly states that the company collects personal data input by users to train its AI models when users have not opted out. Her newsletter article links to the opt-out.

She also explained that the trend gives OpenAI a way to access “Fresh New Images.” Essentially, instead of relying on images from the internet that may be old or outdated, OpenAI gets recent images of individuals.

Additionally, many of these images might not already be on social media, making them exclusive.

Jarovsky wrote, “My second argument for why this is a clever privacy trick is that people are uploading new images, including family photos, intimate pictures, and images that likely weren’t on social media before, merely to feel part of the viral trend. OpenAI is gaining free and easy access to these images, and they will only have the originals. Social media platforms and other AI companies will only see the ‘Ghiblified’ version.

Moreover, the trend is ongoing, and people are learning that when they want a fun avatar of themselves, they can simply upload their pictures to ChatGPT. They no longer need third-party providers for that.”

Jarovsky is not the only one cautioning people against this trend. The online privacy and security platform Proton has also discussed the dangers of uploading one’s image to AI tools.

In a post on X (formerly Twitter), the platform wrote, “Think this is a fun trend? Think again. While some don’t have an issue sharing selfies on social media, the trend of creating a ‘Ghibli-style’ image has seen many people feeding OpenAI photos of themselves and their families.”

It further stated, “Aside from the risks of data breaches, once you share personal photos with AI, you lose control over how they are used since those photos are then….”

Image Credits: Google Images

Sources: The Economic Times, Hindustan Times, Moneycontrol

Find the blogger: @chirali_08
