Artificial intelligence (AI) is often presented as a triumph of human ingenuity: fast, efficient, and increasingly autonomous. But beneath this polished narrative lies a hidden global workforce performing the most disturbing, repetitive, and psychologically taxing tasks that machines cannot yet do.

This labour is not randomly distributed. It is systematically outsourced to the Global South, where economic vulnerability makes such work both acceptable and invisible.

In India, this system rests heavily on women, particularly those from rural, Dalit, and Adivasi backgrounds, whose labour powers AI systems used largely in wealthier countries. What appears to be technological progress is, in reality, a deeply unequal exchange. Data flows upward, profit flows outward, and trauma stays local.

The Backbone Of AI Is Human

Artificial intelligence depends on human-labelled data. Every “smart” system has first been trained by thousands of workers who manually classify images, videos, and text. According to NASSCOM, in India alone, around 70,000 people were engaged in data annotation by 2021, contributing to a $250 million industry, a figure that has only grown since.

But the geography of value reveals the imbalance: 60% of this revenue comes from the United States, while only 10% originates within India. This means Indian workers are not building domestic systems; they are feeding global platforms. The Global South supplies the labour; the Global North captures the value.

Women form half or more of this workforce, not by accident but by design. As Monsumi Murmu’s experience reveals, this labour is embedded in everyday life, performed from verandas and bedrooms. “I would close my eyes and still see the screen loading,” she said. This captures how global AI systems intrude into the most intimate corners of local lives.

Cheap Labour And Structural Exploitation

Companies deliberately recruit from rural and semi-rural areas, where nearly 80% of workers originate. These regions offer lower wages, weaker labour protections, and a workforce eager for stable income. Women, especially, are seen as ideal workers: compliant, detail-oriented, and willing to accept home-based, contract roles.

This is not empowerment in the traditional sense; it is cost optimisation disguised as inclusion. By keeping women at home, companies avoid infrastructure costs while also reinforcing gender norms that limit mobility and bargaining power. The very factors that make these jobs accessible also make workers easier to exploit.

Priyam Vadaliya, a researcher working on AI and data labour, said in her conversation with The Guardian, “The work’s respectability and the fact that it arrives at the doorstep as a rare source of paid employment often creates an expectation of gratitude. That expectation can discourage workers from questioning the psychological harm it causes.”

Women are made to feel they should not question the work because it is “respectable” and rare. This expectation silences dissent and normalises conditions that would be unacceptable in more visible, regulated workplaces.

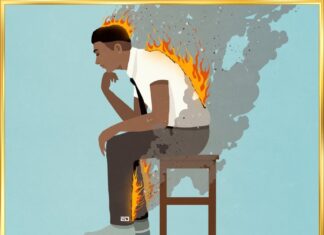

Watching Trauma So Machines Don’t Have To

The core of this labour involves exposure to extreme content: violence, sexual abuse, and exploitation, often at an industrial scale. Workers may review up to 800 pieces of content a day, repeatedly training algorithms to detect what society deems unacceptable.

Studies show this has severe consequences. Research identifies traumatic stress as the most significant psychological risk, with symptoms including anxiety, intrusive thoughts, and sleep disruption. Sociologist Milagros Miceli argues that content moderation should be classified alongside “dangerous work,” comparable to high-risk industries.

Murmu recalls, “The first few months, I couldn’t sleep. I would close my eyes and still see the screen loading.” The Guardian reports that she dreamt of gory images of accidents and sexual violence. Unfortunately, she couldn’t escape.

Monsumi Murmu’s experience reflects this trajectory of harm. “In the end, you don’t feel disturbed, you feel blank,” she said. This emotional numbing is not resilience; it is a coping mechanism. And when the numbness fades, the trauma resurfaces, often long after the work is done.

When Global Labour Reshapes Intimate Lives

For women workers, the effects of this labour extend beyond mental health into deeply personal domains. Continuous exposure to explicit sexual content alters perceptions of intimacy, desire, and safety, areas already shaped by social and cultural expectations.

Raina Singh’s account in The Guardian illustrates this rupture vividly. At first, she said, “It didn’t feel alarming. Just dull. But there was something exciting too. I felt like I was working behind the AI. For my friends, AI was just ChatGPT. I was seeing what makes it work.”

She recounts, “I had never imagined this would be part of the job. The material was graphic and relentless.” When she raised this issue with her manager, she was told, “This is God’s work – you’re keeping children safe.”

“I can’t even count how much porn I was exposed to… the idea of sex started to disgust me,” she said. She described feeling like “a stranger in my own body,” unable to reconcile her emotional needs with the images she was forced to process daily.

This is where the exploitation becomes distinctly gendered. Women are not only performing invisible labour; they are absorbing its consequences in ways that disrupt relationships and identity. The cost of AI is not just economic; it is psychological, social, and deeply embodied.

Politics Of Invisibility

Despite the risks, support systems are minimal. Of the eight companies examined, only two offered psychological care, and even that required workers to actively seek help. This shifts responsibility away from employers and onto individuals who may lack the language or resources to articulate their distress.

At the same time, non-disclosure agreements (NDAs) enforce silence. Workers cannot speak openly about their jobs, isolating them further. For many women, this silence is compounded by social pressures, as revealing the nature of the work could result in losing both employment and social acceptance.

One worker expressed fear that her family would force her to quit if they understood her job. Combined with modest incomes of £260–£330 per month, this creates a trap: workers endure harm because the alternative, unemployment, feels worse. Silence, in this system, is not accidental. It is engineered.

AI’s Intelligence Is Built On Unequal Worlds

Artificial intelligence is often described as the future. But its present is built on deeply unequal global arrangements, where the most advanced technologies depend on the most marginalised workers. In India, that burden falls heavily on women, whose labour remains unseen even as it becomes indispensable.

What we are witnessing is not just technological progress, but a form of digital extraction, where the Global South provides cheap labour, absorbs psychological harm, and receives only a fraction of the value created. Until this imbalance is addressed, AI will remain ethically compromised.

Recognising this reality is the first step. The next is accountability: stronger labour protections, mandatory mental health support, transparent job roles, and global responsibility from the companies that profit most. Because intelligence, no matter how artificial, should not come at the cost of human dignity.

Images: Google Images

Sources: The Guardian, India Today, Siasat

Find the blogger: Katyayani Joshi
